IT4Innovations operates large supercomputers as well as smaller complementary systems. The complementary systems represent emerging, non-traditional, and highly specialised hardware architectures that are still uncommon in supercomputing centres. They have been launched at IT4Innovations, and new programming models, libraries, and application development tools are being deployed on them to extract the maximum possible performance from the hardware. Complementary systems thus allow research teams to test and compare experimental architectures with traditional ones (e.g., x86 + Nvidia GPUs), and to optimise and accelerate computations in new research areas.

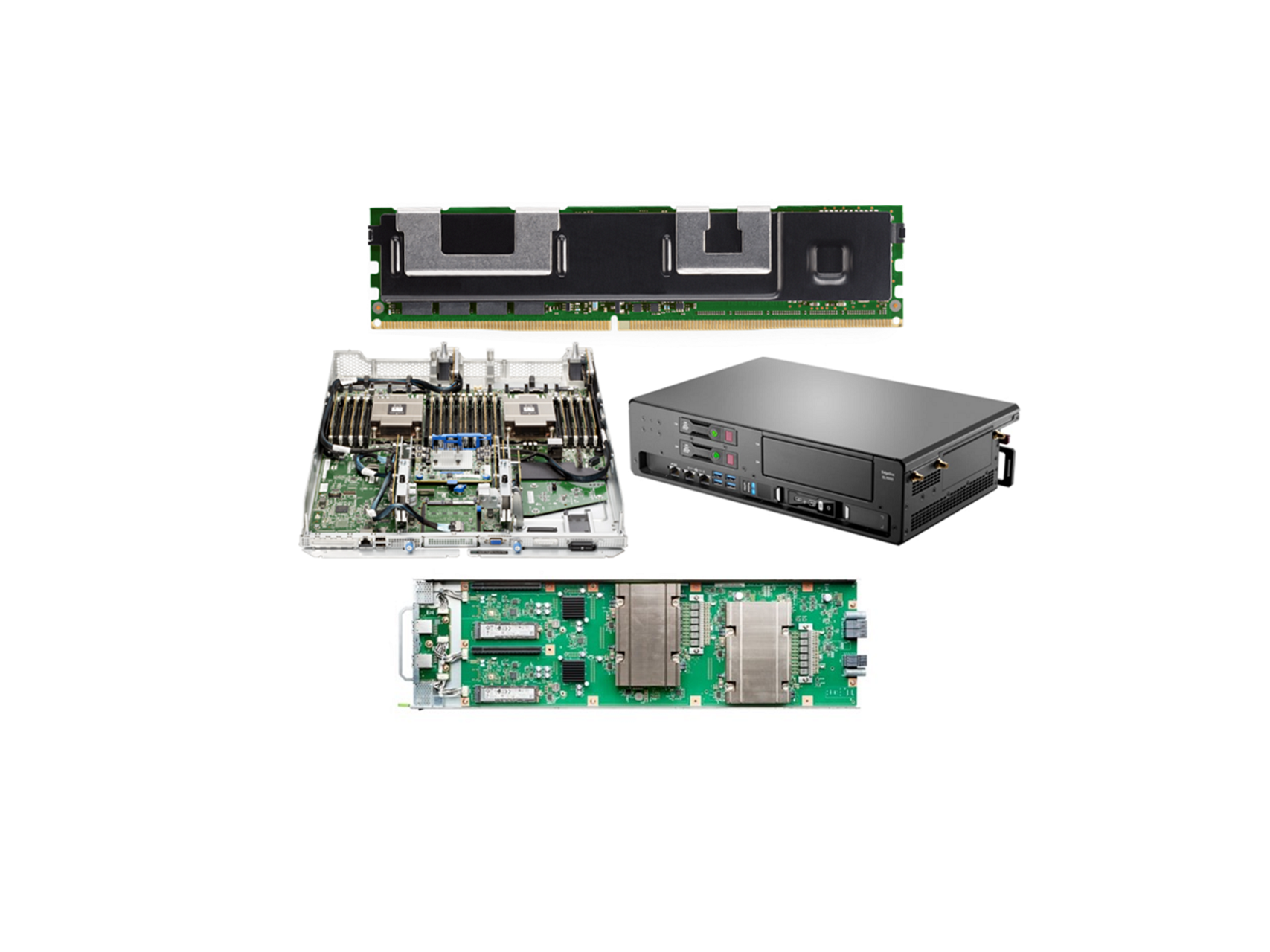

Complementary systems consist of several parts, all built on servers from Hewlett Packard Enterprise and supplied and implemented by M Computers from Brno, Czech Republic. The complementary systems project incorporates the latest and most discussed experimental HPC and AI platforms, some of which represent the first-ever deliveries of these technologies to the Czech Republic. The technical specification of the complementary systems is as follows:

Compute partition 1 – Arm A64FX processors

The compute nodes of the first part of the complementary systems are built on Arm A64FX processors with integrated fast HBM2 memory. This is essentially a small-scale replica of part of Fugaku, the world’s most powerful supercomputer in recent years, installed at the RIKEN Center for Computational Science in Japan (currently the second most powerful supercomputer). The configuration consists of eight HPE Apollo 80 compute nodes interconnected by a 100 Gb/s InfiniBand network.

Compute partition 2 – Intel processors, Intel PMEM

The compute nodes in this part of the complementary systems are based on Intel technologies. The servers are equipped with third-generation Intel Xeon processors and persistent (non-volatile) Intel Optane memory with a total capacity of 2 TB and 8 TB per server. This part consists of two HPE ProLiant DL380 Gen 10 Plus nodes.

Compute partition 3 – AMD processors, AMD accelerators, AMD FPGA (Xilinx)

The third part of the complementary systems is built on AMD technologies. The servers are equipped with third-generation AMD EPYC processors, four AMD Instinct MI100 GPU cards interconnected by a fast bus (AMD Infinity Fabric), and two Xilinx Alveo FPGA cards with differing performance. Xilinx is one of AMD’s latest significant acquisitions. This part consists of two HPE Apollo 6500 Gen 10+ nodes.

Compute partition 4 – Edge server

Complementary systems also include the HPE EL1000 edge server, designed to process AI jobs directly at the data source, often outside the data centre. The server offers high computing power for AI inference thanks to the NVIDIA Tesla T4 GPU accelerator, several communication technologies (10 Gb Ethernet, Wi-Fi, LTE), and low power consumption.
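The four partitions described above can be summarised as a small inventory. The following Python sketch is purely illustrative: the `Partition` class, its field names, and the `totals` helper are hypothetical, and the per-node accelerator counts assume that the figures quoted above (four GPU cards and two FPGA cards in the AMD part, one GPU in the edge server) apply to each server.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Partition:
    """One part of the complementary systems (illustrative structure only)."""
    name: str
    server_model: str
    nodes: int
    gpus_per_node: int = 0   # assumption: accelerator counts are per server
    fpgas_per_node: int = 0


PARTITIONS = [
    Partition("Arm A64FX", "HPE Apollo 80", nodes=8),
    Partition("Intel Xeon + Optane PMEM", "HPE ProLiant DL380 Gen 10 Plus", nodes=2),
    Partition("AMD EPYC + Instinct MI100 + Xilinx Alveo", "HPE Apollo 6500 Gen 10+",
              nodes=2, gpus_per_node=4, fpgas_per_node=2),
    Partition("Edge server (NVIDIA Tesla T4)", "HPE EL1000", nodes=1, gpus_per_node=1),
]


def totals(partitions):
    """Sum nodes and accelerators across all partitions."""
    return {
        "nodes": sum(p.nodes for p in partitions),
        "gpus": sum(p.nodes * p.gpus_per_node for p in partitions),
        "fpgas": sum(p.nodes * p.fpgas_per_node for p in partitions),
    }


summary = totals(PARTITIONS)
print(summary)
```

Under these assumptions, the summary counts thirteen servers in total across the four parts.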

Network Infrastructure

The individual nodes of the complementary systems are interconnected by a high-speed, low-latency InfiniBand HDR network, built on a forty-port Nvidia/Mellanox switch with a speed of up to 200 Gb/s. The infrastructure also includes a 10 Gb Ethernet network.
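To put these link speeds into perspective, the short Python sketch below estimates how long it would take to move 2 TB of data (the smaller Optane capacity mentioned for partition 2) over each link. It is a back-of-the-envelope calculation only: it assumes decimal units (1 TB = 10^12 bytes), the nominal peak rate of each link, and no protocol overhead, so real transfers will be slower. The function name `transfer_seconds` is illustrative.

```python
def transfer_seconds(size_bytes: float, link_gbit_per_s: float) -> float:
    """Ideal transfer time at the nominal link rate, ignoring all overhead."""
    bits = size_bytes * 8
    return bits / (link_gbit_per_s * 1e9)


TB = 1e12  # decimal terabyte, an assumption for this estimate

links = [
    ("InfiniBand HDR, 200 Gb/s", 200),
    ("InfiniBand, 100 Gb/s", 100),
    ("Ethernet, 10 Gb/s", 10),
]

for label, rate in links:
    print(f"{label}: {transfer_seconds(2 * TB, rate):.0f} s")
```

At nominal rates this gives roughly 80 s, 160 s, and 1600 s respectively, which illustrates why the InfiniBand network, rather than Ethernet, carries data-intensive traffic between nodes.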

Software

An important part of complementary systems is software, which includes environments, compilers, numerical libraries, and algorithm development and debugging tools.

The HPE Cray Programming Environment is a comprehensive tool for developing HPC applications in a heterogeneous environment. It supports all complementary systems architectures, and includes optimised libraries, support for the most widely used programming languages, and several tools for analysing, debugging, and optimising parallel algorithms.

Intel oneAPI is a tool for developing applications deployed on heterogeneous platforms – CPU, GPU, and FPGA. It is planned to be used primarily for FPGA cards in the complementary systems.

AMD ROCm is a software package that includes programming models, development tools, libraries, and integration tools for the most widely used AI frameworks that run on top of AMD GPU accelerators.

The complementary systems are operational. For more technical details, see the IT4Innovations website or the detailed documentation. Information on how to obtain access to the computational resources of the IT4Innovations National Supercomputing Center is also available on the IT4Innovations website.